Two Equilibria, One Dilemma

Selective transparency versus hard censorship in Singapore and China.

What I learned in my independent study on game theory for political science — on digital authoritarianism, signaling, and how different regimes shape the informational environment to change what citizens believe, what they expect from one another, and whether collective action ever becomes possible.

Introduction

I recently fell in love with game theory after being introduced to the Stag Hunt framework in my International Relations class. There was something genuinely exciting about seeing political choices through a strategic lens. Once I started thinking in terms of players, incentives, payoffs, and expectations, many political situations suddenly felt much sharper and more legible.

That curiosity eventually pushed me to write a full proposal and persuade my professor to supervise me for an independent study spanning four areas: congressional rules, deterrence, voting, and bargaining. I began with static games: the Prisoner's Dilemma, Chicken, and Stag Hunt, using them as entry points to understand the strategic logic underlying each domain. Over time, I moved to broader applications in international relations, elections, and domestic bargaining. What attracted me most, I realized, was not only the elegance of the models themselves, but the way they forced me to think more clearly about uncertainty, strategy, and the structure of political interaction.

This page is part of that study journey. I want it to work as a writing archive rather than a final polished answer: a place where I keep adding notes to each framework in the spirit of the Feynman technique. If I can explain an idea clearly in simple language, I probably understand it more honestly too. This essay is one of those attempts: a way of thinking through why authoritarian governments facing similar technological pressures can still stabilize very different strategies of information control.

Why signaling matters

Among the many frameworks in game theory, I keep returning to signaling because it feels unusually close to how politics actually works. Political actors rarely know each other's true intentions, capacities, or resolve with certainty. States signal strength. Governments signal confidence. Leaders signal commitment. Even silence can function as a strategic signal.

What I like about signaling is that it is never only about the action itself. It is also about what that action communicates and how others interpret it. That is what makes it so useful for political science. Politics is not only about force. It is also about uncertainty, perception, and belief formation. The same action can produce very different political outcomes depending on how it is read by others.

This is partly why I chose the signaling framework here. Once I started thinking about authoritarian information control, I realized censorship is not only a way to suppress information. It can also communicate confidence, insecurity, capacity, and resolve. That made the framework feel especially satisfying to me because it bridges formal theory and real political behavior in a way that is both intuitive and analytically sharp.

Main texts that shaped this study

These are the main books I chose for this independent study. I wanted texts that felt rigorous, politically grounded, and broad enough to connect formal models with real strategic behavior.

Game Theory for Political Scientists

James D. Morrow

The main foundation for how I think about equilibrium, signaling, and strategic interaction in political science.

The Evolution of Cooperation

Robert Axelrod

A classic that made strategic interaction feel alive, iterative, and politically meaningful beyond formal abstraction.

Complexity and Cooperation

Robert Axelrod

A text that pushed me to think beyond neat models and toward more dynamic political systems and adaptive behavior.

The central dilemma

This piece began from a question that felt deceptively simple: if authoritarian governments confront similar technological pressures, why do they not converge on the same information-control strategy? Why does one regime rely more heavily on infrastructural closure, while another preserves partial openness and instead leans on targeted legal tools?

Digitization makes information easier to circulate, easier to coordinate around, and harder to fully contain. That creates a shared dilemma for authoritarian regimes. They want to preserve regime stability and legitimacy, but they also operate inside a world shaped by digital networks, transnational flows, and new expectations around connectivity.

The usual answer is that authoritarian regimes censor because they fear dissent. But that answer always felt incomplete to me. It describes the goal without really clarifying the mechanism. What interested me more was the strategic side of the problem: how information control changes what citizens know, what they think others know, and how those shifts alter the possibility of coordination.

What this means is that censorship is never only about suppression. It is also about managing the conditions under which people form beliefs and decide whether collective action is worth attempting. The question is not simply whether citizens are dissatisfied. It is whether they believe enough others are dissatisfied, informed, and ready to move at the same time.
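One way to make that coordination logic concrete is a threshold model of the kind associated with Granovetter. This is an illustrative assumption on my part, not the signaling model used later in this essay: each citizen joins only once the number of people already participating reaches a personal threshold. The function name and the example thresholds are hypothetical.

```python
def cascade_size(thresholds):
    """Return how many citizens end up participating.

    thresholds[i] is the number of people citizen i needs to see
    participating before joining (a hypothetical, stylized input).
    """
    joined = 0
    while True:
        # Everyone whose threshold is met by the current crowd joins.
        next_joined = sum(1 for t in thresholds if t <= joined)
        if next_joined <= joined:
            return joined  # fixed point: nobody new is willing to move
        joined = next_joined


# Two crowds that differ in a single citizen's threshold.
print(cascade_size([0, 1, 2, 3, 4]))  # 5: each joiner tips the next
print(cascade_size([1, 1, 2, 3, 4]))  # 0: nobody is willing to go first
```

The two crowds are almost equally dissatisfied, yet one reaches full participation and the other never starts. That is the sense in which what people expect of one another can matter more than how unhappy they are.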

The signaling model

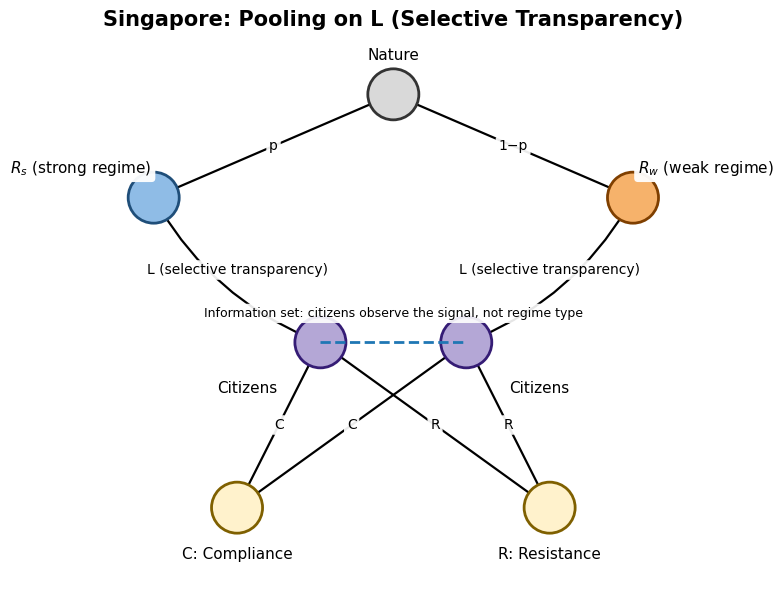

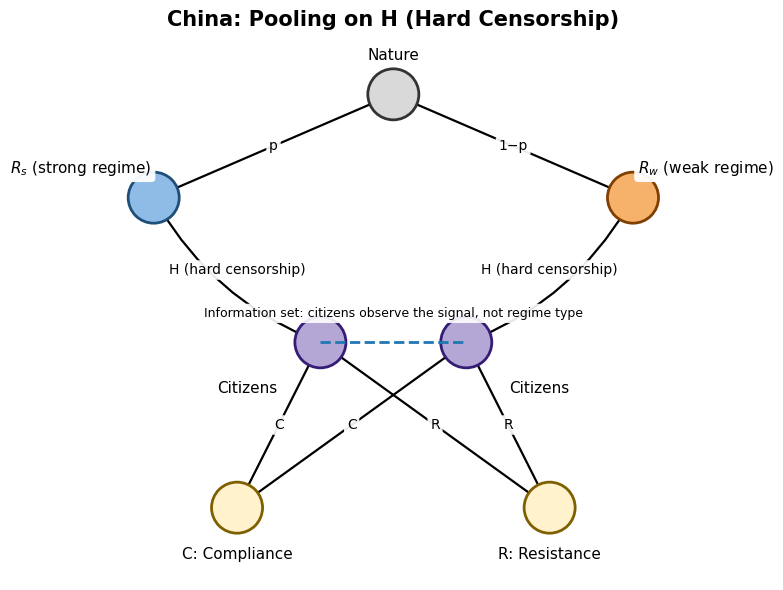

In the model I use, the regime has private information about its type or strength, while citizens observe only its public choices: in this case, the style of censorship. Those choices are not neutral. They become signals that people interpret. The regime may be strong or weak, but citizens do not directly observe which one it is. They only see whether it chooses hard censorship or a more selective and limited strategy.

Once censorship is treated as a signal, authoritarian politics looks less like a one-sided optimization problem and more like a strategic game under incomplete information. Citizens update from what they see. The regime anticipates that updating. And the real outcome depends not only on what each side prefers, but on whether the informational environment makes coordinated resistance seem possible.

The intuition I find most useful is this: regimes do not only control information, they also shape the meaning of the environment citizens are operating in. Hard censorship can signal coercive strength and raise the expected cost of resistance. Selective openness can signal confidence, but it can also invite testing if citizens interpret it as vulnerability.
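The updating step can be sketched with Bayes' rule. This is a minimal illustration under my own assumptions: the function name, the prior, and the probabilities that each type chooses hard censorship are hypothetical numbers, not values from the model in the figures.

```python
def posterior_strong(prior_strong, p_hard_if_strong, p_hard_if_weak,
                     observed_hard=True):
    """Citizens' belief that the regime is strong after seeing its choice.

    Returns None when the observed choice has probability zero under the
    assumed strategies, because Bayes' rule then gives no answer.
    """
    if observed_hard:
        like_strong, like_weak = p_hard_if_strong, p_hard_if_weak
    else:
        like_strong, like_weak = 1 - p_hard_if_strong, 1 - p_hard_if_weak
    numerator = prior_strong * like_strong
    denominator = numerator + (1 - prior_strong) * like_weak
    if denominator == 0:
        return None  # off-path observation: beliefs must be assumed
    return numerator / denominator


# Only strong regimes censor hard: the signal is fully revealing.
print(posterior_strong(0.6, 1.0, 0.0))  # 1.0
# Weak regimes sometimes imitate: the signal is only partly informative.
print(posterior_strong(0.6, 1.0, 0.5))
```

The more often a weak regime imitates a strong one, the less citizens learn from seeing hard censorship.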

Two pooling equilibria

The core idea of this section is that different authoritarian regimes can respond to the same political dilemma with different pooling equilibria. In a pooling equilibrium, both strong and weak regimes choose the same visible strategy, so the signal itself reveals nothing about type and citizens are left with their prior beliefs. That makes the idea especially useful for explaining why distinct regimes can appear to settle on coherent but very different governance styles.

One equilibrium pools on hard censorship. In that world, both strong and weak regimes choose dense control because hard censorship sufficiently disrupts coordination and keeps citizens compliant. The other equilibrium pools on selective transparency. There, the regime preserves a more open informational surface, but targeted laws and credible sanctions still keep resistance unattractive.

What I like about this framework is that it shows how the same broad dilemma can generate more than one stable authoritarian strategy. It is not just a story of more repression versus less repression. It is a story about how different mixes of visibility, legal punishment, coordination risk, and state capacity can produce different strategic worlds.

It also helps explain why separating equilibria may be less stable than they first appear. One might imagine strong regimes choosing openness and weak regimes choosing harsher control, but in practice signals are often imitable. Weak regimes may mimic stronger ones if doing so buys compliance, while strong regimes may also choose hard control if openness creates too much risk of coordination. Pooling, in other words, can be more robust than neat separation.
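To check my own intuition about pooling, here is a toy two-type signaling game. Everything in it is a hypothetical assumption made for illustration: the payoff numbers, the `rent` the regime keeps when citizens comply, and the off-path beliefs are not taken from the model in the figures. The sketch only tests whether either type would gain by deviating from a pooled action.

```python
TYPES = ("strong", "weak")


def citizens_resist(belief_strong, win=1.0, punish=2.0):
    """Citizens resist only if the expected gain beats complying (0)."""
    expected_gain = (1 - belief_strong) * win - belief_strong * punish
    return expected_gain > 0


def regime_payoff(regime_type, action, resisted, costs, rent=5.0):
    """Regime keeps `rent` if citizens comply, and pays the action's cost."""
    return (0.0 if resisted else rent) - costs[regime_type][action]


def is_pooling_equilibrium(pooled_action, prior_strong, off_path_belief, costs):
    """True if neither type gains by deviating from the pooled action.

    On the path, citizens keep their prior. After a deviation they hold
    `off_path_belief`, which the modeler has to assume.
    """
    deviation = "selective" if pooled_action == "hard" else "hard"
    on_path_resist = citizens_resist(prior_strong)
    off_path_resist = citizens_resist(off_path_belief)
    return all(
        regime_payoff(t, pooled_action, on_path_resist, costs)
        >= regime_payoff(t, deviation, off_path_resist, costs)
        for t in TYPES
    )


# Hypothetical costs: hard censorship is pricier, especially for weak regimes.
costs = {
    "strong": {"hard": 1.0, "selective": 0.5},
    "weak": {"hard": 2.0, "selective": 0.5},
}

print(is_pooling_equilibrium("hard", 0.6, 0.0, costs))       # True
print(is_pooling_equilibrium("selective", 0.6, 0.0, costs))  # True
print(is_pooling_equilibrium("hard", 0.6, 1.0, costs))       # False
```

With these made-up numbers, both pooling outcomes survive when citizens read any deviation as a sign of weakness, and pooling on hard censorship collapses once openness is read as strength. In this toy version, which equilibrium holds depends on how an unexpected move would be interpreted.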

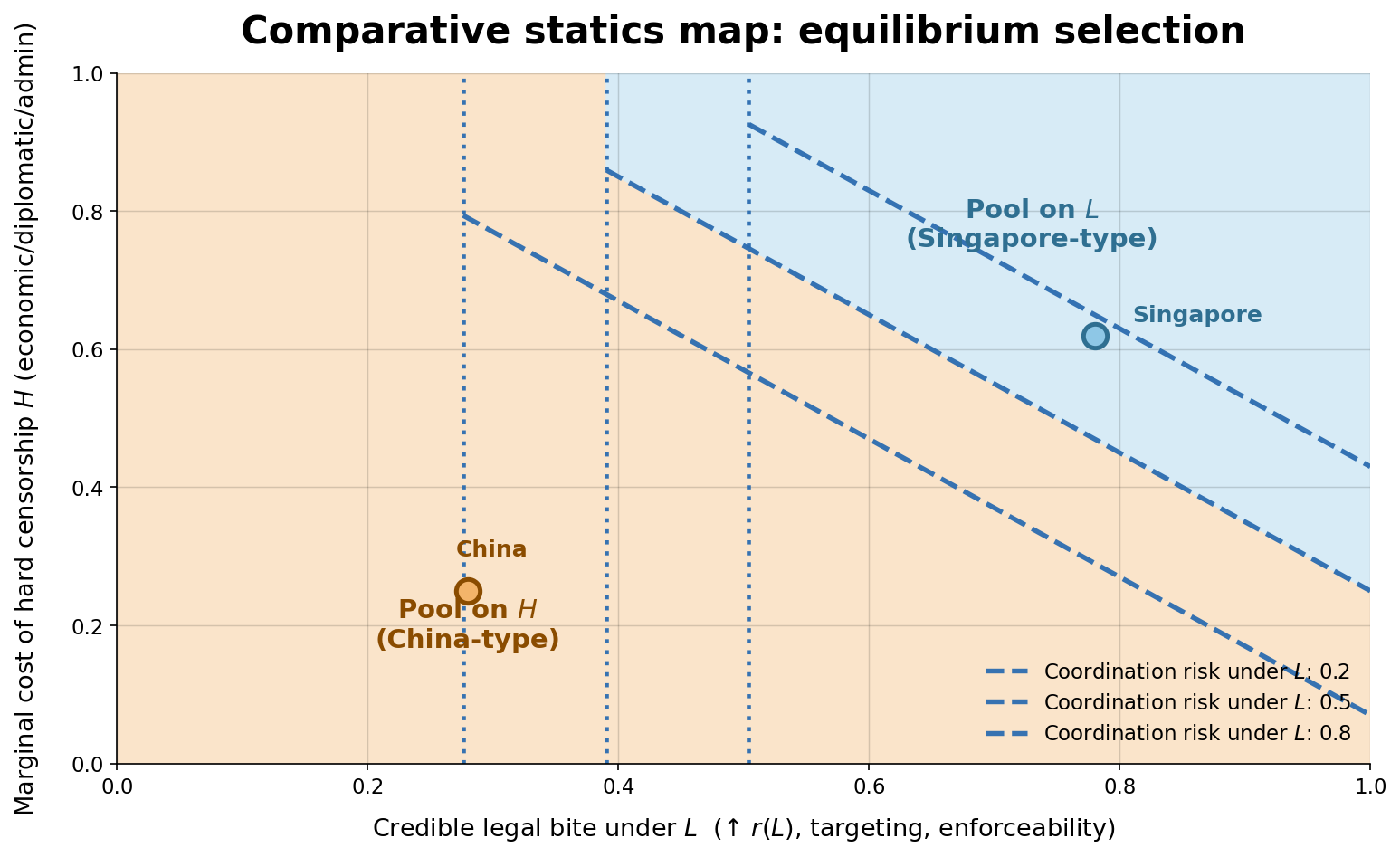

Comparative statics and equilibrium selection

What pushes the system toward one equilibrium rather than the other? The logic becomes clearer if we think about three interacting forces. First, how costly is hard censorship for the regime? Second, how effective are targeted legal tools under a more selectively open environment? Third, how easy does partial openness make it for citizens to coordinate?

If broad hard censorship is administratively expensive, economically costly, or politically burdensome, then the regime has stronger incentives to preserve some level of openness. Selective transparency becomes more attractive when targeted interventions can still keep resistance risky enough to deter collective action. But if openness sharply lowers coordination costs and makes resistance easier to organize, then the regime needs much stronger legal bite to keep that equilibrium stable.
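The three forces can be written as two rough conditions under which selective transparency holds. The notation is hypothetical and my own, not taken from the figures, and it is a sketch of the intuition rather than a derived result.

```latex
% Hypothetical notation (assumptions, not from the essay's figures):
% c_H, c_S : regime's cost of hard censorship / selective transparency
% B        : a citizen's benefit from successful collective action
% \kappa   : coordination cost citizens face under partial openness
% \pi, F   : probability and size of a targeted legal sanction
\begin{align}
  c_H &> c_S            && \text{(openness is cheaper for the regime)} \\
  \pi F &\ge B - \kappa && \text{(legal bite still deters resistance)}
\end{align}
```

Read this way, anything that lowers the coordination cost \kappa raises the right-hand side of the second condition, so the expected sanction \pi F has to rise with it for selective transparency to remain stable.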

This is one of the reasons I like the model so much. It moves the discussion away from flat country description and toward a more general strategic map. Instead of just saying Singapore and China are different, it helps explain what kinds of structural and institutional conditions make their different strategies more likely to persist.

Singapore and selective transparency

Singapore interested me because it complicates the blunt image of authoritarianism as total closure. What drew me to the case was the way it preserves a relatively open informational surface while still using targeted legal instruments to make defiance costly. In my paper, I think of this as selective transparency with legal bite.

What matters here is not simply that Singapore looks softer than China. It is that the state can avoid many of the political and economic costs of blanket hard censorship while still keeping the risks of resistance high enough to deter collective action. Openness exists, but it is partial, conditional, and never free of consequence.

That is what makes Singapore so analytically useful to me. It shows that legal instruments can do some of the strategic work that we often associate with broad infrastructural censorship, allowing the regime to maintain a surface of openness while still shaping what kinds of risks citizens are willing to take.

China and hard censorship

China is the harder case in a different sense. The architecture there is not about leaving things open while raising the cost of dissent at the margins. It is about making hard control feasible at scale through infrastructure, platform governance, and centralized administrative reach.

The interesting question is not whether China is more repressive, which it obviously is, but why that configuration holds together strategically. Partial openness is not just inconvenient here; it is treated as a coordination risk, something that can generate shared expectations and lower the threshold for collective action. The system is built to prevent exactly that.

What China offers as a contrast case, then, is not just strong censorship. It is a regime where the information environment itself becomes part of how the state manages the threat of mobilization. That is a different kind of stability from Singapore's, and that difference is the whole point of putting these two cases side by side.

What this section taught me

The biggest lesson I took from this section is that digital authoritarianism does not produce one universal playbook. Authoritarian regimes can confront a shared structural dilemma while still settling into different equilibria depending on how information control interacts with belief formation, coordination, and the costs of enforcement.

In that sense, censorship is not only repression. It is also communication. It can signal capacity, confidence, insecurity, or willingness to punish. And because citizens respond not only to constraints but also to what those constraints mean, equilibrium outcomes depend on interpretation as much as on force.

More personally, this essay is part of why I want my site to feel like a writing archive rather than a flat portfolio. Some things I make are projects. Some are notes. Some are essays that help me think. This one belongs in that last category. It also reminds me why I enjoy game theory so much in political science: it does not just give us abstract models, it gives us a way to clarify why different political worlds can emerge from the same underlying dilemma.