China's Digital Authoritarianism: A Realist Approach

Can an Orwellian state genuinely exist in a modern world where technology has reshaped the very fabric of society? Using a Realist perspective, this piece argues that China's digital authoritarianism exemplifies how technology has become a sharpened sword for consolidating state power.

Introduction

There are 5.52 billion internet users worldwide as of 2024, and the average person spends over six hours online each day. Global investment in artificial intelligence surpassed $184 billion in 2024 and is projected to reach $632 billion by 2028. Digital platforms have become an indispensable part of human life.

A 2022 Pew Research Center survey found that digital technologies are widely seen as empowering individuals, breaking down information barriers and having a positive impact on democracy. Yet one-third of the world's countries are now governed by authoritarian regimes. While traditional authoritarian regimes often maintain legitimacy through patron-client networks and suppress dissent through physical means, modern autocratic regimes have expanded their control into cyberspace.

In 2023, the White House used the term "authoritarian counterrevolution" to describe a growing phenomenon: digital authoritarianism, defined as the pervasive use of technology to surveil, repress, and manipulate populations. The Chinese Communist Party (CCP) leads the world in developing advanced authoritarian tools, including surveillance systems, censorship architectures, facial recognition, and data-driven policing. With a projected $1 trillion of investment flowing into generative AI in the coming years, it is increasingly urgent to recognize how states use AI to maintain social control and suppress dissent. Using the Realist framework, this essay argues that China's digital authoritarianism exemplifies how technology has become a sharpened instrument for consolidating state power.

Realism framework

Realism is one of the oldest theories in international relations, offering a pragmatic lens through which to analyze state behavior in an anarchic global system. Grounded in principles articulated by Hans Morgenthau in 1948 and later expanded by Robert Jervis, Realism emphasizes power, security, and the pursuit of national interest as the core driving forces of state action.

What drew me to applying this lens to digital authoritarianism is that it reframes what might look like domestic surveillance as a fundamentally strategic act. AI governance in authoritarian states is not primarily about technology. It is about power. The Realist framework makes that visible.

Morgenthau and the pursuit of power

In Politics Among Nations, Morgenthau frames international politics as a struggle for power. States act rationally to pursue their national interest, which is intrinsically tied to the accumulation and preservation of power. This helps explain why China's AI governance prioritizes state control over technological liberalization: AI in China is designed to serve political interests rather than market-driven growth.

China's approach reflects a deeply ingrained philosophy of centralized control. The Chinese term for artificial intelligence, 人工智能 (rén gōng zhì néng, literally "human-made intelligence"), frames AI as a human-made extension that, in the CCP's view, ultimately serves state interests. This aligns with the ancient proverb 活到老,学到老 ("one is never too old to learn"), underscoring the CCP's belief that technologies must be shaped and controlled before they mature in ways that escape governance. China's deployment of surveillance systems and censorship tools exemplifies this mindset, consolidating domestic authority in line with Morgenthau's second principle: interest defined in terms of power.

Morgenthau insists that policy must arise from political analysis and prioritize the national interest. China's strict regulation of AI reflects exactly this Realist calculation: it enables the state to maintain power and mitigate the perceived perils of advanced technology falling into ungoverned hands.

Jervis and the anarchic system

Jervis expands on classical Realism by highlighting the role of competition in an anarchic international system, where states constantly maneuver to safeguard their sovereignty rather than prioritize global cooperation. Through this lens, the geopolitical implications of China's digital authoritarianism become especially alarming. Many autocratic regimes have adopted China's AI tactics, actively challenging the liberal democratic values of transparency and accountability.

Viewed through the Realist lens, China's digital authoritarianism is not merely a domestic control mechanism. It is part of a broader strategy to assert China's position in the international order. Technology allows China to reinforce its sovereignty, mitigate external threats, and project global influence.

Algorithms, censorship, and the thought police

Algorithms, alongside data and computing power, form the critical triad of AI. China's regulation of recommendation algorithms shows that promoting innovation is not a core value of its governance framework. In response to national security concerns about Western influence, China's AI governance integrates censorship and surveillance, blocking over 800 websites and automating content suppression on social media to keep public discourse aligned with state ideology.

On social media platforms, AI algorithms function as thought police, monitoring and removing posts or accounts deemed to violate community standards. Sensitive topics include Tibetan independence, the 1989 Tiananmen Square massacre, and anything else the CCP considers a threat to its legitimacy. China has also fostered domestic equivalents of major Western platforms, including Baidu, WeChat, Alipay, Weibo, and Taobao, which keep data that would otherwise flow through foreign-owned systems within the state's reach.

Early moves toward algorithmic control date to around 2017, when the addictive algorithmic feeds of ByteDance, the company behind TikTok, came to be seen as a threat to public discourse. The Cyberspace Administration of China (CAC) later responded with an algorithm registry that requires developers to explain the rationale behind their systems and submit them to the regulator. A limited version of each filing is then published. This is transparency as control, not as accountability.

Generative AI and deep synthesis regulation

In 2022, China introduced the term "deep synthesis" as an alternative to "deepfake" and simultaneously announced a regulatory framework to govern its use, covering text, image, voice, and video generation. The 2023 generative AI regulation went further, requiring training data to be accurate, objective, and diverse, and generated content to embody the CCP's socialist core values. Content related to sex or race is restricted, and training data must respect intellectual property rights.

This framework places significant pressure on tech companies, which must invest in extensive compliance to remain competitive in a fast-moving AI market. It also means that large language models operating in China must be ideologically aligned before they can be commercially viable.

Data, surveillance, and the CCP crackdown

Data is the foundation of the AI triad. Feeding massive volumes of surveillance data into training datasets can bias the resulting algorithms, and those who control the models can nudge individuals toward choices that serve their own ends. The CCP grew concerned that tech giants like Alibaba and ByteDance held more data than the government itself, creating a direct tension between private capital and state sovereignty.

In response, the CCP cracked down on prominent domestic companies, including Alibaba and its affiliate Ant Group. The CAC ordered major tech companies to fix recommendation algorithms that create echo chambers, required platforms to let users opt out of personalization, and mandated that recommended content reflect socialist core values. These measures illustrate the CCP's effort to centralize data control and ensure that AI technologies remain aligned with its ideological and political objectives.

Geopolitical implications

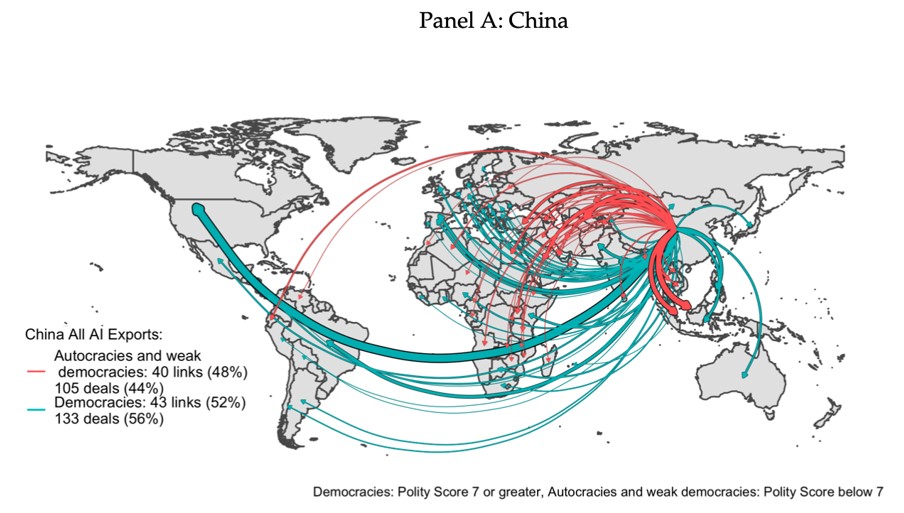

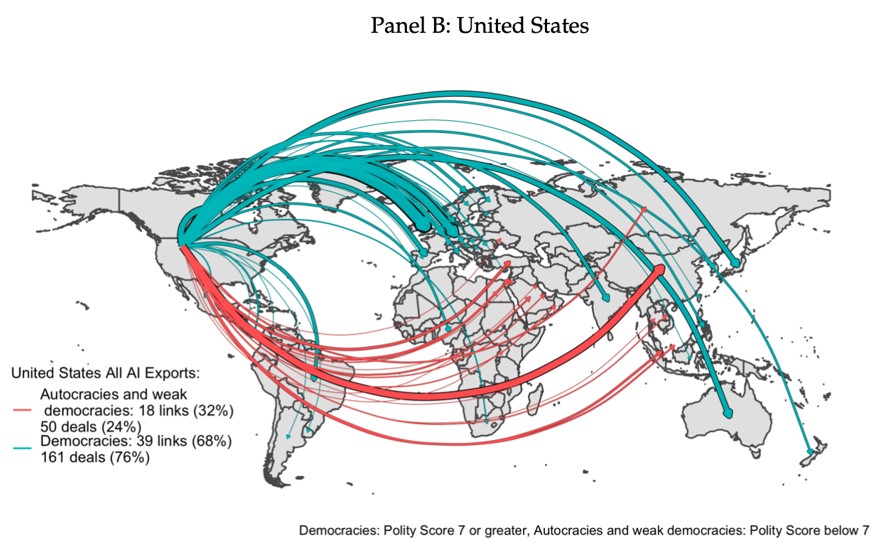

China's digital authoritarianism has significant global implications. Of the world's roughly one billion surveillance cameras, more than half, approximately 700 million, are from China. Chinese surveillance start-ups export their technology at roughly twice the rate of their US counterparts. Studies also find a strong autocratic bias in China's exports of facial recognition technology, which go disproportionately to autocratic regimes and weak democracies, particularly those experiencing domestic unrest.

China exports its digital authoritarian model to many like-minded regimes, including Zimbabwe, Iran, Saudi Arabia, Sudan, Syria, and Egypt. Developing countries such as these are more likely to import surveillance technologies under the pretext of security and public order. Some tools, including facial recognition, AI, and IoT technology, have even been reported in democratic countries like Turkey. At least 47 governments are reported to be actively adopting China's tactics.

For instance, Vietnam mandated that, as of December 25, 2024, only accounts verified by phone number can share or post content on social media. Zimbabweans have experienced multiple internet shutdowns, with Facebook and WhatsApp blocked to suppress protests. Such measures reflect the diffusion of China's model of digital authoritarianism into the broader world.

Countering China's digital authoritarianism

De-risking China's authoritarian AI is challenging. Various punitive measures have been implemented to address human rights violations and curb the spread of authoritarianism. In 2022, the US Department of Commerce introduced new restrictions on China's access to advanced AI chips to curb its ability to develop AI-powered weapons of mass destruction and surveillance technology. In response, China invested heavily in domestic AI chip development to reduce long-term dependence on foreign supply chains.

In 2023, European regulators fined TikTok $368 million for violating children's privacy, and the UK's Information Commissioner's Office issued a separate $15.7 million fine for misusing children's data. Several countries have moved to restrict or ban TikTok, though these measures do not directly curb China's export of surveillance AI to authoritarian states.

Governments, international bodies, and research institutes have also worked to limit the risk of digital authoritarianism. The Australian Strategic Policy Institute proposed a three-step framework of auditing, red teaming, and regulation to mitigate AI-enabled harm. President Biden's 2023 Executive Order established new standards for AI safety, privacy, and equity. Frameworks like the EU's GDPR and the OECD AI Principles exemplify multilateral cooperation to uphold democratic values, including transparency, accountability, fairness, and privacy.

Three leading AI developers, Google DeepMind, OpenAI, and Anthropic, have also published if-then frameworks to anticipate the potential risks of AI. The logic is conditional: if a predefined red line is crossed, a corresponding response is triggered, such as additional safeguards or governmental action. Applied to surveillance, for example, if a tool is found to be biased by disproportionately targeting specific groups, the government must stop using it. Countering China's digital authoritarianism ultimately requires coordinated efforts from international policymakers, companies, and individuals.

Conclusion

Realism reminds us of a world where states prioritize sovereignty and seek to enhance their relative power. China's digital authoritarianism exemplifies the Realist perspective on international relations: by exporting its governance model, China reshapes the landscape of international influence. Its strategic use of AI demonstrates the competitive nature of states in a world where technological capabilities increasingly dictate power dynamics.

AI entrenched in authoritarian regimes creates a resilient infrastructure that is harder to dismantle than traditional authoritarian structures, bringing the Orwellian state closer to reality. International bodies must coalesce around the transparent, accountable development and adoption of explainable and responsible AI to counter these trends. As AI-powered authoritarianism grows, the international community must rethink how to counter not only China's AI policies but also the broader global spread of digital autocracy.

A closing thought

This essay was written in November 2024, early in my thinking about AI governance and digital authoritarianism. It was also where I first realized that international relations theory could give me a much sharper vocabulary for understanding why states behave the way they do around technology. Realism was the starting point. It will not be the last.